Using an Apache stack for indexing your logs and metrics

In the previous posts I talked about the ELK stack for monitoring Alfresco. Another option for loading the logs and metrics extracted by Logstash is a SOLR index server (instead of Elasticsearch). SOLR is part of the Alfresco architecture by default, so in principle it seems a more natural place for indexing our Alfresco logs and metrics. Besides, there are ports of Kibana for SOLR, such as Banana or Silk, that can be deployed in our dedicated SOLR instance. Basically, you need the following steps:

- Install a SOLR 6.x instance (http://lucene.apache.org/solr/downloads.html).

- Create a logstash collection in SOLR for storing the extracted logs and metrics ($SOLR_HOME/bin/solr create -c logstash -d server/solr/configsets/data_driven_schema_configs/).

- Install the logstash-output-solr_http plugin for Logstash ($LS_HOME/bin/logstash-plugin install logstash-output-solr_http), as described in the Logstash-to-SOLR indexing post listed under Links, and configure the solr_url parameter in the output section of logstash.conf.

- Clone the Banana project via git (git clone https://github.com/lucidworks/banana).

- Deploy (copy) the Banana src folder to $SOLR_HOME/server/solr-webapp/webapp/ (you may also build the dist folder via npm install && bower install && grunt build, and then copy dist instead of src).

- Create a collection in SOLR for the Banana dashboards ($SOLR_HOME/bin/solr create -c banana-int).
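The solr_url setting from the steps above could look roughly like the output section printed by the following sketch. The base URL is an assumption taken from the Banana URL used later in this post (a stock SOLR install listens on port 8983 instead), and I am assuming that solr_url has to include the collection name; the variable and function names are purely illustrative.

```shell
#!/bin/sh
# Sketch: print an output section for logstash.conf that uses the solr_http plugin.
# Assumptions (not from the original setup): SOLR_BASE_URL and COLLECTION are
# illustrative values; adjust host, port and collection to your installation.
SOLR_BASE_URL="${SOLR_BASE_URL:-http://localhost:8080/solr}"
COLLECTION="${COLLECTION:-logstash}"

# Print the output section, pointing solr_url at the logstash collection.
solr_output_section() {
  cat <<EOF
output {
  solr_http {
    solr_url => "${SOLR_BASE_URL}/${COLLECTION}"
  }
}
EOF
}

solr_output_section
```

You can paste the printed section into your logstash.conf, replacing any existing output section for Elasticsearch.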

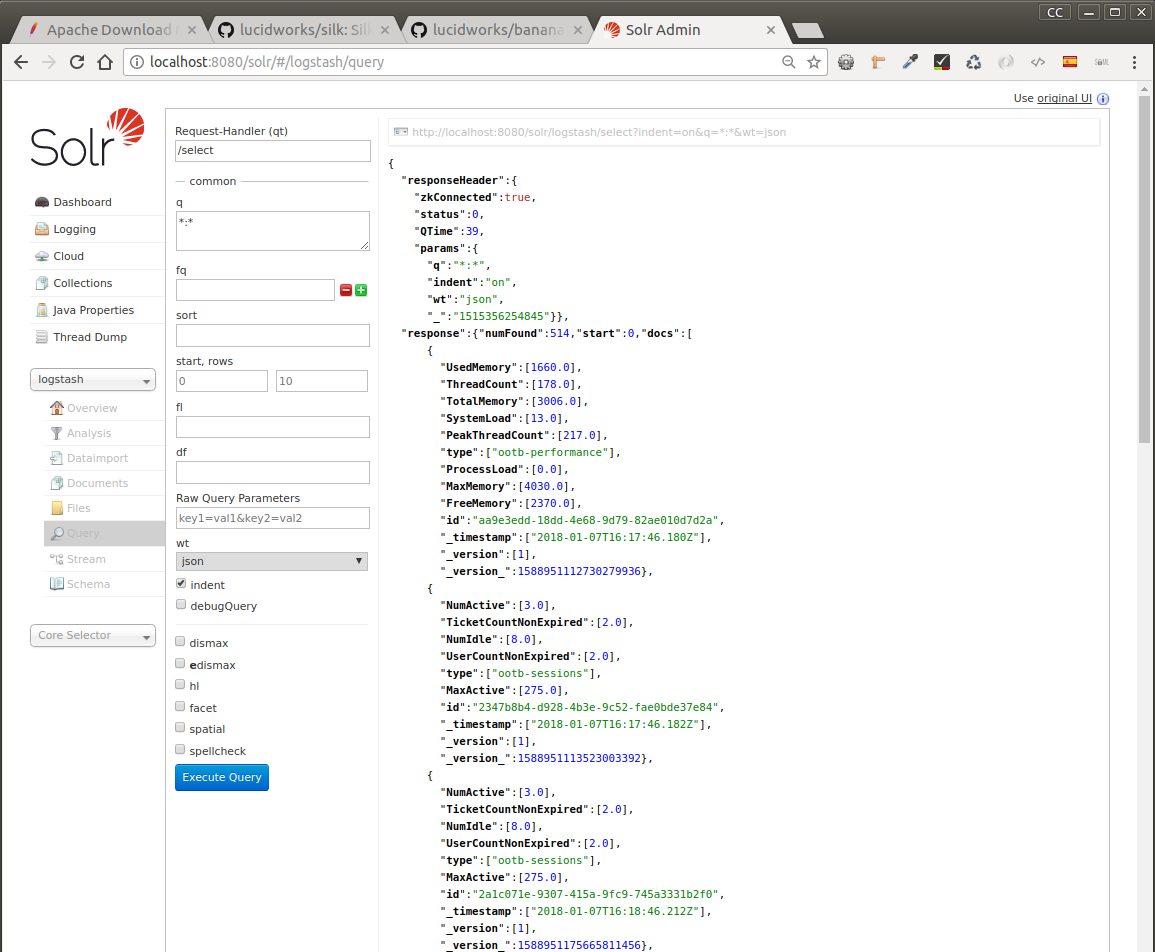

Once your Logstash process is running, you can check the indexed documents in the SOLR query console for the logstash collection. This console is part of the SOLR administration UI, which is a nice tool for inspecting and managing collection configuration and for running search queries.
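The same check can also be done from the command line against the select handler of the collection. This is a minimal sketch: the base URL is again an assumption borrowed from the Banana URL in this post, and solr_select_url is a hypothetical helper name.

```shell
#!/bin/sh
# Assumption: SOLR base URL as used for Banana in this post; a default SOLR
# install would be http://localhost:8983/solr instead.
SOLR_BASE_URL="${SOLR_BASE_URL:-http://localhost:8080/solr}"

# Build a select URL that matches all documents and returns the first N as JSON.
solr_select_url() {
  collection="$1"
  rows="${2:-5}"
  printf '%s/%s/select?q=*:*&rows=%s&wt=json\n' "$SOLR_BASE_URL" "$collection" "$rows"
}

# Usage (needs a running SOLR instance):
#   curl -s "$(solr_select_url logstash 5)"
solr_select_url logstash 5
```

If Logstash is shipping events correctly, the numFound value in the response should grow over time.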

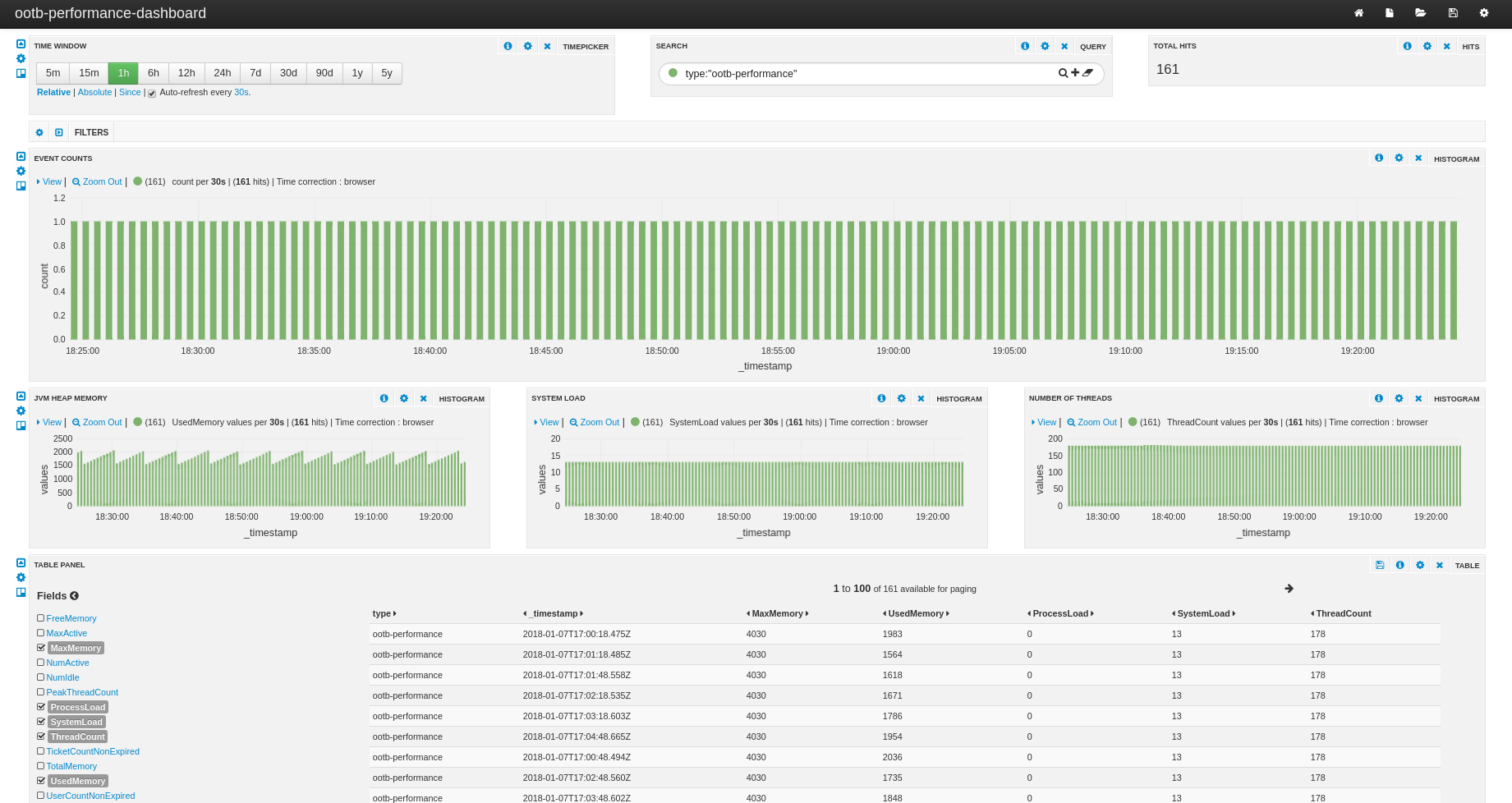

Next, you can configure a Banana dashboard for visualizing some metrics events:

http://localhost:8080/solr/banana/

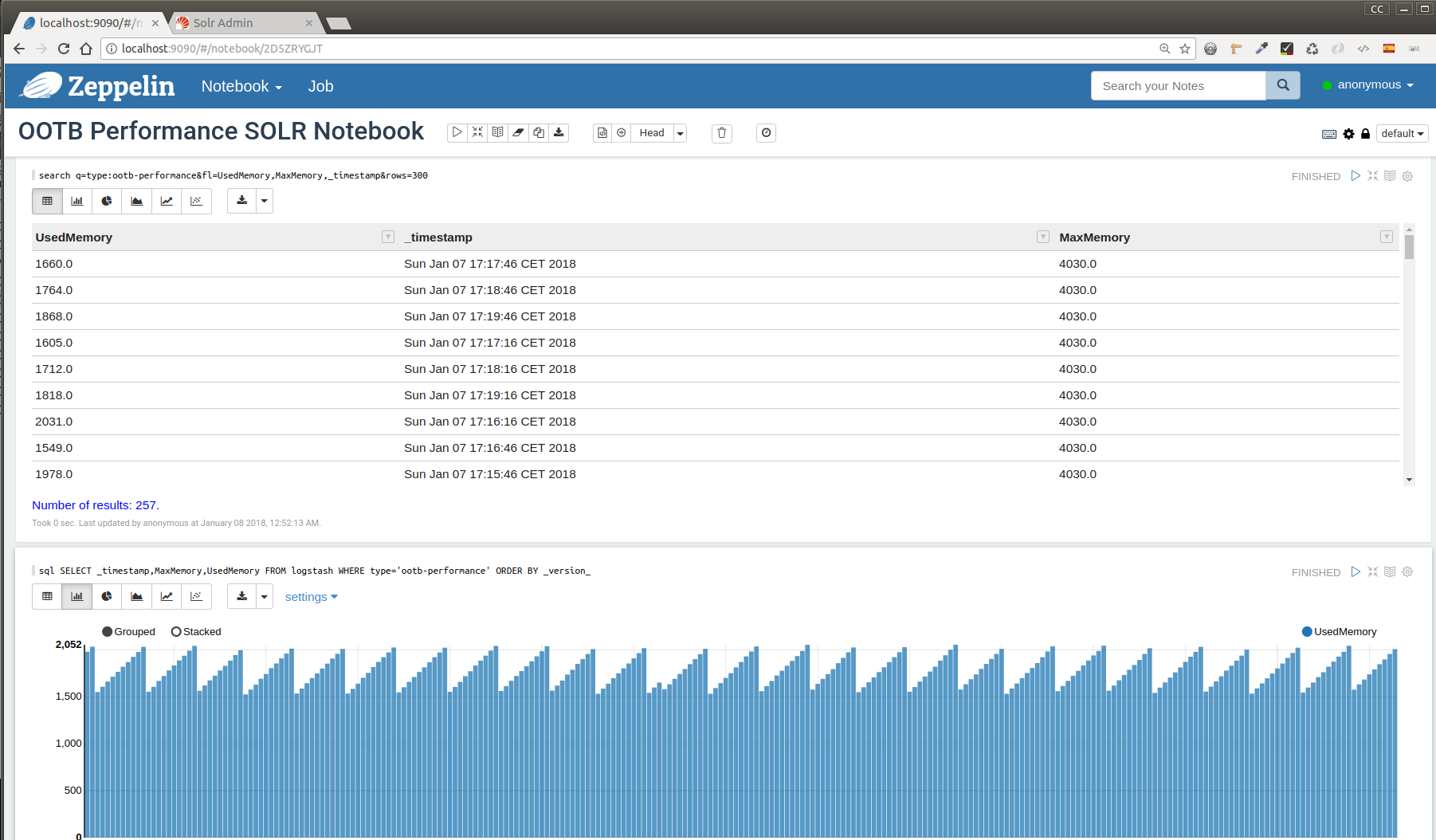

Finally, if you are working with the Apache ecosystem, these SOLR collections can also be consumed from Apache Zeppelin via SQL. This topic was covered at the past Beecon 2017 in the context of SOLR features. For this, you need to install a SOLR interpreter for Apache Zeppelin (./bin/install-interpreter.sh --name zeppelin-solr --artifact com.lucidworks.zeppelin:zeppelin-solr:0.0.1-beta2) and create a new interpreter for SOLR with the corresponding Zookeeper endpoint (solr.zkHost). Once the interpreter is available, you can configure a notebook and run SQL and SOLR queries.
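Before setting up a notebook, it may help to verify that SQL works against the collection at all. SOLR 6 exposes its SQL engine over HTTP through the /sql handler, which as far as I know is only available when SOLR runs in SolrCloud mode. The sketch below is not the Zeppelin interpreter syntax; the base URL, the helper name and the statement are illustrative assumptions.

```shell
#!/bin/sh
# Assumption: SOLR base URL as used elsewhere in this post; adjust to your setup.
SOLR_BASE_URL="${SOLR_BASE_URL:-http://localhost:8080/solr}"

# Build the URL of the SQL handler for a collection.
solr_sql_url() {
  printf '%s/%s/sql\n' "$SOLR_BASE_URL" "$1"
}

# Illustrative statement: list a few document ids from the logstash collection.
STMT='SELECT id FROM logstash LIMIT 10'

# Usage (needs a running SolrCloud instance):
#   curl --data-urlencode "stmt=$STMT" "$(solr_sql_url logstash)"
echo "stmt=$STMT"
solr_sql_url logstash
```

If this returns rows, a similar statement should be a reasonable first query to try from the Zeppelin notebook.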

Links:

- https://medium.com/@sreekantht/indexing-log-files-to-solr-using-logstash-5aad3aa3dba

- https://github.com/lucidworks/banana

- https://github.com/lucidworks/silk

- https://lucene.apache.org/solr/guide/6_6/solr-jdbc-apache-zeppelin.html

- https://github.com/lucidworks/zeppelin-solr

- https://www.youtube.com/watch?v=u7pOhYRf4LY